
Outlever turns companies into the voice of their industry by building owned media ecosystems through brand newsrooms.

© 2026 - All Rights Reserved

Every AI lab is losing money serving your company right now. They know it. And they are doing it on purpose.


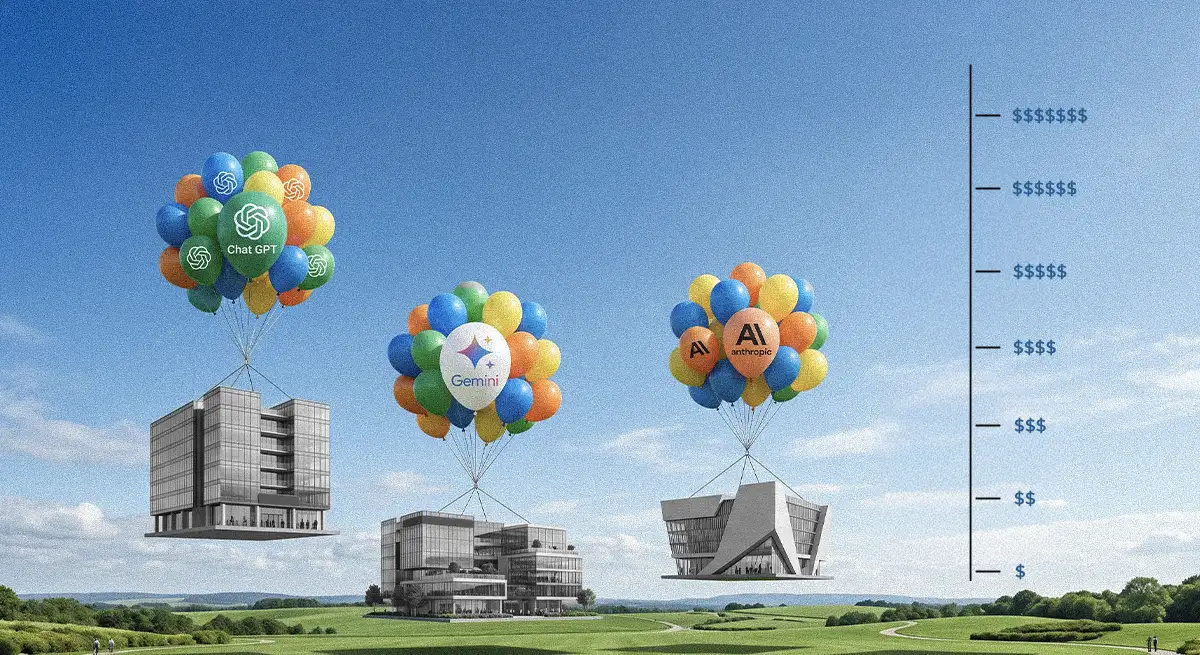

OpenAI, Anthropic, Google, and the rest are running an industry-wide loss-leader program at a scale that has no precedent. They are selling enterprises filet mignon at gas station hot dog prices and calling it a business model. The gap between what your company pays for AI subscriptions and what it actually costs to serve those seats is not a rounding error. It is a gulf. And every organization that has built workflows, products, or entire business units on top of these subsidized prices is standing right on the edge of it.

This should be front of mind for every CTO, CFO, and head of operations reading this. Because when the pricing corrects, and it will, the companies that treated AI as a permanently cheap utility are going to wake up to bills that make their current SaaS spend look quaint.

Pull out the napkin. This matters.

Claude Pro costs $20 a month. For that, you get access to Sonnet 4.6, Opus 4.6, web search, code execution, file creation, and roughly 5x the usage of the free tier. On the API side, Sonnet 4.6 costs $3 per million input tokens and $15 per million output tokens. Opus 4.6 runs $5 input and $25 output per million tokens.

A knowledge worker using Claude for a few hours a day (uploading documents, drafting reports, analyzing data) can easily burn through several million tokens per week. At API rates, that same workload runs somewhere between $200 and $400 a month per seat. Some power users push well beyond that. But on a Pro subscription, the company is paying $20 per head.
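The per-seat figure can be checked with napkin arithmetic. The sketch below prices one seat's weekly token use at the Sonnet 4.6 and Opus 4.6 API rates quoted above and converts it to a monthly bill. The 10M-input / 1.5M-output weekly mix is an assumption chosen for illustration, not a measured workload; input tends to dominate because uploaded documents and earlier conversation turns are sent back to the model each time, but real ratios vary. The model keys are labels, not official API model IDs.

```python
# Napkin math: one knowledge-worker seat priced at pay-as-you-go API rates.
# Prices (USD per million tokens) are the ones quoted in the article.
# The model keys are illustrative labels, not official API model IDs.
PRICES_PER_M = {
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
    "opus-4.6": {"input": 5.00, "output": 25.00},
}

WEEKS_PER_MONTH = 52 / 12  # about 4.33 weeks in an average month
SUBSCRIPTION = 20.00       # Claude Pro, USD per seat per month


def monthly_api_cost(model: str, input_m_per_week: float, output_m_per_week: float) -> float:
    """Return the monthly USD cost of a seat consuming the given millions of tokens per week."""
    price = PRICES_PER_M[model]
    weekly = input_m_per_week * price["input"] + output_m_per_week * price["output"]
    return weekly * WEEKS_PER_MONTH


# Hypothetical workload: 10M input and 1.5M output tokens per week.
for model in PRICES_PER_M:
    cost = monthly_api_cost(model, input_m_per_week=10.0, output_m_per_week=1.5)
    print(f"{model}: ${cost:,.0f}/month at API rates, "
          f"{cost / SUBSCRIPTION:.0f}x the ${SUBSCRIPTION:.0f} subscription")
```

Under this assumed mix both models land inside the $200 to $400 range, roughly 11 to 19 times the subscription price.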

Anthropic is not the only one eating this cost. Microsoft was reportedly losing over $20 per user per month on GitHub Copilot. For power users, the compute burn was hitting $80 a month on a $10 subscription. One widely cited analysis found that Anthropic users were consuming upwards of $8 in compute for every $1 of subscription revenue. OpenAI's own VP of Product, Nick Turley, has described their subscription pricing as something they "stumbled into" and has floated the idea of phasing out unlimited plans entirely, comparing them to "unlimited electricity."

ChatGPT Plus has been $20 a month for three years. In that time, the models got dramatically more capable and the features multiplied: image generation, code interpretation, voice mode, agentic reasoning, web search. And the price never moved. For enterprise buyers who locked in team or business rates during this window, the question is not whether they got a good deal. The question is how long that deal survives.

Every major provider is playing the same game with the same math.

Google offers Gemini Advanced at $20 a month bundled into Google One AI Premium while simultaneously charging developers real money for API access to the same models. Meta gives away its Llama models for free and pays for the compute behind hundreds of millions of AI queries across its platforms entirely through ad revenue. xAI's Grok undercuts everyone on API pricing at $0.20 per million input tokens, a number that only makes sense if you assume the company is willing to hemorrhage money to buy market share.

The pattern is identical across the board. Price for adoption, not for economics. Lock organizations in. Make AI a load-bearing part of every team's daily workflow. Worry about the bill later.

For enterprises, "later" is arriving. OpenAI is losing money on consumer subscribers and is reportedly considering a strategic pivot away from its consumer bets toward a tighter focus on enterprise, where the unit economics are slightly less ruinous. The Wall Street Journal reported that the company missed key revenue and user targets in its sprint toward an IPO. The subsidy era is not winding down gracefully. It is showing cracks everywhere.

Then the subsidy math went from merely bad to catastrophic. The reason is agentic AI.

When AI was a chatbot (you ask a question, it answers), token consumption was relatively predictable. A conversation might run a few thousand tokens. Heavy use might push into the tens of thousands. That was manageable at subsidized rates.

The agentic shift changes the equation completely. Claude Code sessions run autonomously for extended periods, burning through tokens at rates that dwarf conversational usage. Users have reported exhausting 5-hour rate limit windows in under 90 minutes. GitHub just announced that Copilot is moving to usage-based billing on June 1, 2026, specifically because the flat-fee model collapsed under agentic workloads. GitHub's own announcement acknowledged that Copilot has evolved substantially and that agentic usage "is becoming the default," driving higher compute and inference demands. Sam Altman has said publicly that OpenAI now needs to become "an AI inference company," an acknowledgment that agentic usage requires a fundamentally different economic model.

For enterprise engineering teams, the implications are concrete. Agent Teams (multiple AI instances working in parallel on a single project) multiply the burn rate dramatically. A developer running three or four concurrent coding agents is not consuming 3x or 4x the tokens of a chat conversation; they are consuming an order of magnitude more. And the subscription price on that seat has not changed.
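One reason agentic sessions burn so much more is mechanical. With a stateless model API, an agent loop re-sends its whole accumulated context (instructions, files read, tool output, earlier reasoning) on every step, so billed input grows roughly with the square of the number of steps. The sketch below counts tokens for a short chat and a longer agent session under that assumption. Every parameter is hypothetical, and the model ignores prompt caching, which several providers offer to discount a re-sent prefix.

```python
def loop_tokens(steps: int, base_context: int, added_per_step: int, output_per_step: int) -> int:
    """Total tokens billed for a loop that re-sends its full history on every step.

    Assumes a stateless API with no prompt caching: each step sends the current
    context as input and generates output_per_step tokens; the context then grows
    by that output plus added_per_step (a user message or tool result).
    """
    total = 0
    context = base_context
    for _ in range(steps):
        total += context + output_per_step           # re-sent input plus new output
        context += added_per_step + output_per_step  # history keeps growing
    return total


# Hypothetical parameters, chosen only to illustrate the shape of the curve.
chat = loop_tokens(steps=5, base_context=2_000, added_per_step=200, output_per_step=500)
agent = loop_tokens(steps=30, base_context=8_000, added_per_step=1_500, output_per_step=500)
four_agents = 4 * agent  # several agents running in parallel on one seat

print(f"chat: {chat:,} tokens")
print(f"one agent session: {agent:,} tokens ({agent / chat:.0f}x the chat)")
print(f"four concurrent agents: {four_agents:,} tokens")
```

With these made-up numbers a single agent session bills roughly 58 times the tokens of the chat, before any parallelism. The exact multiple depends entirely on the assumed step count and tool-output size; the quadratic growth is the part that generalizes.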

This is where it gets ugly for organizations that have not done the work.

Over the past two years, thousands of companies have woven AI subscriptions deep into their operations. Marketing teams draft copy through ChatGPT Plus. Engineering teams write and review code through Claude Pro. Research teams synthesize documents. Customer success teams summarize tickets. Finance teams model scenarios. These are not experiments anymore. They are load-bearing workflows.

Most of these companies are budgeting for AI at current subscription prices. A team of 50 on Claude Pro costs $1,000 a month. The same team on ChatGPT Plus costs the same. At those prices, AI is a rounding error on the P&L. Cheaper than a single SaaS tool. Cheaper than a contractor.

But the equivalent API usage for that same team, if the company were paying the actual cost of the tokens consumed, would be somewhere between $15,000 and $40,000 a month depending on usage intensity. That is not a rounding error. That is a line item that needs its own budget code.
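The team-level figures can be reproduced from numbers stated earlier in the article. Scaling the $200 to $400 per-seat estimate to 50 people gives $10,000 to $20,000 a month, so the $15,000 to $40,000 range implies an average of $300 to $800 per seat, a mix that presumably includes the power users who push well beyond the typical seat. The sketch below shows the arithmetic; it introduces no new data.

```python
TEAM_SIZE = 50
SUBSCRIPTION_PER_SEAT = 20  # Claude Pro or ChatGPT Plus, USD per seat per month

# What the team pays today on flat subscriptions.
subscription_bill = TEAM_SIZE * SUBSCRIPTION_PER_SEAT

# The single-worker estimate ($200-$400 per seat) scaled to the whole team.
baseline_low = 200 * TEAM_SIZE
baseline_high = 400 * TEAM_SIZE

# Average per-seat cost implied by the team-level $15,000-$40,000 range.
implied_low = 15_000 / TEAM_SIZE
implied_high = 40_000 / TEAM_SIZE

# Annualized low end of the team-level range.
annual_low = 15_000 * 12

print(f"subscriptions: ${subscription_bill:,}/month")
print(f"baseline API-equivalent: ${baseline_low:,}-${baseline_high:,}/month")
print(f"implied per seat: ${implied_low:,.0f}-${implied_high:,.0f}/month")
print(f"annual at the low end: ${annual_low:,}")
```

Even the low end annualizes to $180,000, consistent with the six-figure annual budget mentioned later in the piece.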

When prices adjust, and they will adjust, the companies that treated $20-a-month AI as a permanently cheap input are going to get hit with bills they did not budget for, at a time when the workflows are too embedded to rip out. The subsidy creates dependency. The dependency makes the price increase unavoidable. That is the whole trap.

The data backs this up. KPMG's Q1 2026 AI Quarterly Pulse found that U.S. organizations are projecting average AI spending of $207 million over the next 12 months, nearly double the figure from the same period last year. But a Goldman Sachs research survey found many large companies are already overrunning their AI budgets by orders of magnitude, with AI spending on pace to rival engineers' salaries in the near future.

And most organizations are not even tracking consumption properly. Swami Chandrasekaran, head of AI and data labs at KPMG North America, told Marketplace: "Even, like, a quarter, two quarters ago, nobody bothered about LLM consumption costs." Brian Jabarian, an economist at the University of Chicago who consults with companies on AI transformation, was more blunt: "The time for the bill is going to come."

There is a specific mechanism that will force the repricing, and it is already in motion.

Both OpenAI and Anthropic are preparing for IPOs. Anthropic has reportedly surpassed $30 billion in annualized revenue, up from $9 billion at the end of 2025. OpenAI is on pace for roughly $25 billion. These numbers look impressive until you look at the cost side.

OpenAI projects $115 billion in cumulative cash burn through 2029 and has committed to $665 billion in compute spending by 2030. Oracle took on $43 billion in debt in a single fiscal year to build data centers for OpenAI. The entire infrastructure behind these services is financed on the assumption that revenue will eventually cover costs. Right now, it does not.

When you are private and burning venture capital, you can subsidize inference. You can run models at a loss. You can offer $20-a-month plans that cost $100 or more to serve. An IPO changes the equation overnight. Public markets demand margins. Analysts demand unit economics. Investors demand a path to profitability that does not depend on infinite fundraising.

The moment these companies go public, the pressure to close the gap between subscription price and actual cost becomes existential. The fastest way to close that gap is to raise prices, impose usage caps, or shift to consumption-based billing. All three will hit current enterprise subscribers hard.
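The three levers hit light and heavy users very differently. The sketch below compares one month for two hypothetical seats under a flat price rise, a usage cap with overage, and pure consumption billing. Apart from the Sonnet 4.6 API prices quoted earlier, every number is an assumption made up for illustration: the $40 raised price, the 10M-token allowance, the $5-per-million overage, the 85/15 input/output split, and the 5M and 50M monthly usage levels.

```python
from dataclasses import dataclass


@dataclass
class Seat:
    name: str
    monthly_tokens_m: float  # millions of tokens consumed per month (hypothetical)


def blended_rate(input_per_m: float, output_per_m: float, input_share: float) -> float:
    """USD per million tokens for a given input/output mix."""
    return input_share * input_per_m + (1 - input_share) * output_per_m


def raised_flat(seat: Seat, new_price: float) -> float:
    """Lever 1: same unlimited plan, higher sticker price (usage does not matter)."""
    return new_price


def capped(seat: Seat, price: float, included_m: float, overage_per_m: float) -> float:
    """Lever 2: flat price covers an allowance; usage above it is billed per million tokens."""
    extra_m = max(0.0, seat.monthly_tokens_m - included_m)
    return price + extra_m * overage_per_m


def consumption(seat: Seat, rate_per_m: float) -> float:
    """Lever 3: every token billed at a blended API rate."""
    return seat.monthly_tokens_m * rate_per_m


rate = blended_rate(3.00, 15.00, input_share=0.85)  # Sonnet 4.6 prices, assumed 85/15 mix
for seat in (Seat("light user", 5), Seat("heavy user", 50)):
    print(f"{seat.name}: today $20, "
          f"raised flat ${raised_flat(seat, 40):.0f}, "
          f"capped ${capped(seat, 20, included_m=10, overage_per_m=5):.0f}, "
          f"consumption ${consumption(seat, rate):.0f}")
```

Under these assumptions the light user's bill barely moves with caps or metering, while the heavy user's jumps from $20 to roughly $220 to $240. That asymmetry is why the agentic power users described above are the most exposed.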

The repricing will not happen all at once, but the signals are already visible for anyone paying attention.

GitHub is moving to usage-based billing on June 1, 2026, replacing flat-rate premium requests with token-based AI Credits. Microsoft has raised Microsoft 365 prices twice in four years, with the latest round specifically tied to AI infrastructure costs. OpenAI has introduced a $100 Pro tier positioned as the new "real" price for heavy users. Anthropic's Max tier at $200 a month provides a preview of what committed usage will actually cost when the subsidies end. One by one, the floor is being raised.

As Geoff Webb, VP at Conga, put it: "This AI land-grab is on a colossal scale, and the price tag for dominating this new world is equally colossal. Monetizing the services and recouping some of that investment is going to force some pretty significant changes in business models and service pricing, and those changes are likely to happen fast."

The companies that survive this transition will be the ones doing the math today. That means auditing actual token consumption across teams, not just counting seats. It means modeling what AI costs look like at 2x, 5x, or 10x current prices. It means building vendor optionality into the stack so that no single provider's pricing change can blow up the budget overnight.
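A first pass at the audit and the 2x/5x/10x modeling can be scripted. The sketch below assumes a hypothetical usage export with one row per team, model, and token count; in practice the counts would come from provider usage dashboards or the usage fields that API responses report (Anthropic's Messages API, for instance, returns `input_tokens` and `output_tokens`). It prices that usage at the API rates quoted earlier and projects current seat spend at each multiplier. The team names, headcounts, and token volumes are invented.

```python
from collections import defaultdict

# API prices quoted in the article, USD per million tokens: (input, output).
PRICES_PER_M = {"sonnet-4.6": (3.00, 15.00), "opus-4.6": (5.00, 25.00)}
SEAT_PRICE = 20.00  # current subscription, USD per seat per month


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Price one usage record at pay-as-you-go API rates."""
    input_rate, output_rate = PRICES_PER_M[model]
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


def audit(records):
    """Sum API-rate cost per team from (team, model, input_tokens, output_tokens) records."""
    totals = defaultdict(float)
    for team, model, input_tokens, output_tokens in records:
        totals[team] += cost_usd(model, input_tokens, output_tokens)
    return dict(totals)


def stress_test(monthly_spend: float, multipliers=(1, 2, 5, 10)) -> dict:
    """Project a monthly bill at each multiple of current spend."""
    return {m: monthly_spend * m for m in multipliers}


# Hypothetical one-month usage export.
records = [
    ("engineering", "opus-4.6", 40_000_000, 6_000_000),
    ("engineering", "sonnet-4.6", 120_000_000, 15_000_000),
    ("marketing", "sonnet-4.6", 20_000_000, 4_000_000),
]
seats = {"engineering": 10, "marketing": 5}  # hypothetical headcounts

for team, api_cost in audit(records).items():
    current = seats[team] * SEAT_PRICE
    scenarios = ", ".join(f"{m}x ${v:,.0f}" for m, v in stress_test(current).items())
    print(f"{team}: pays ${current:,.0f}, API-rate equivalent ${api_cost:,.0f}; {scenarios}")
```

In this made-up example engineering pays $200 a month in seats but consumes $935 worth of tokens at API rates, so a 2x repricing would not close the gap while a 5x one would overshoot it. The useful output is knowing which multiplier your own usage sits at before a vendor picks one for you.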

It also means having an honest conversation with the CFO before the CFO has it with you. Because the gap between what your organization pays for AI today and what it will pay in 18 months is going to be one of the most disruptive line-item increases most companies have ever absorbed. And the organizations that get caught flat-footed will be the ones scrambling to explain why a tool that used to cost less than a team lunch suddenly requires a six-figure annual budget.

The subsidy era is ending. The clock is running. And most enterprises have not even started the conversation.

The best editorial systems don’t happen by accident. Outlever builds them.

